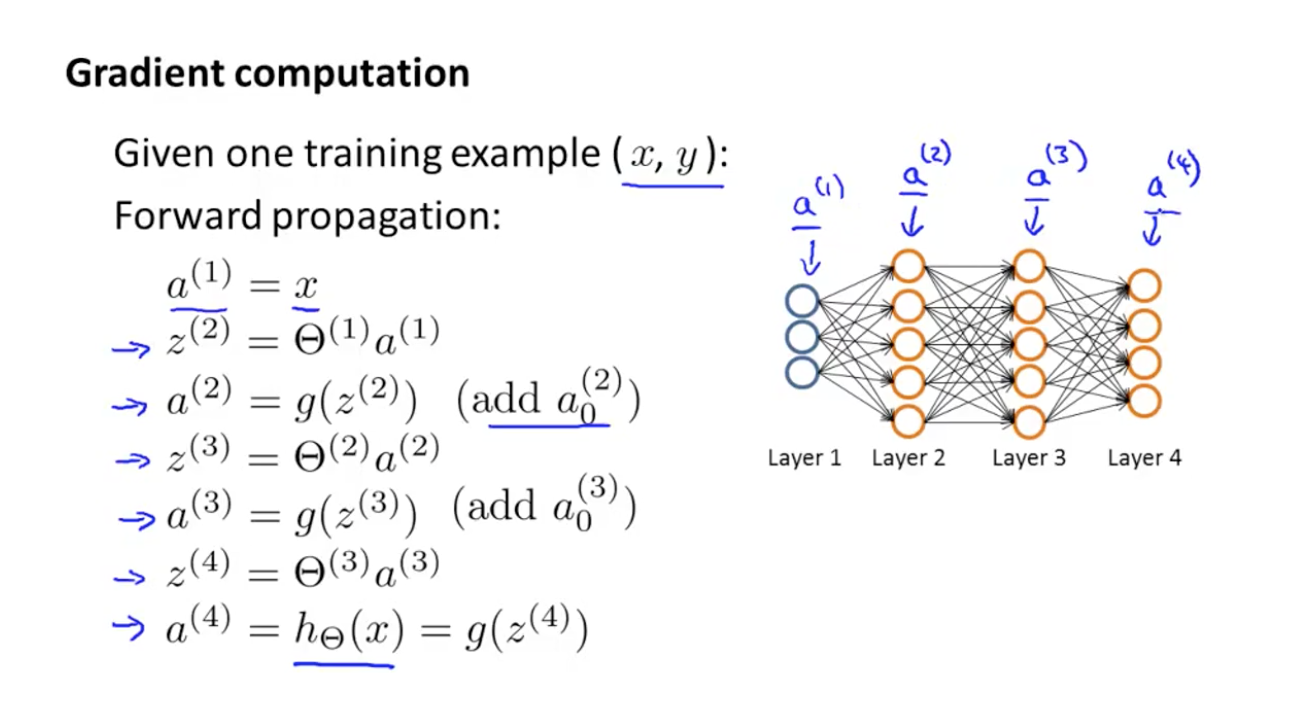

In this article, I would like to go over the mathematical process of training and optimizing a simple 4-layer neural network. I believe this would help the reader understand how backpropagation works as well as realize its importance. The 4-layer neural network consists of 4 neurons for the input layer, 4 neurons for the hidden layers and 1 neuron for the output layer.

W² and W³ are the weights in layer 2 and 3 while b² and b³ are the biases in those layers. Activations a² and a³ are computed using an activation function f. Typically, this function f is non-linear (e.g. sigmoid, ReLU, tanh) and allows the network to learn complex patterns in data. We won’t go over the details of how activation functions work, but, if interested, I strongly recommend reading this great article.

Looking carefully, you can see that all of x, z², a², z³, a³, W¹, W², b¹ and b² are written without the subscripts shown in the 4-layer network illustration above.
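To make the forward pass through this network concrete, here is a minimal sketch in plain Python. It rests on several assumptions that the text does not spell out: two hidden layers of 4 neurons each, weights indexed from the input side (so `W1` maps the input x to z², following the subscript-free symbol list), a sigmoid for f, a linear output neuron with its own bias `b3`, and random initial weights. All function and variable names are illustrative.

```python
import math
import random


def sigmoid(v):
    """Logistic activation, one possible choice for f."""
    return 1.0 / (1.0 + math.exp(-v))


def dense(W, b, inputs):
    """Compute W·inputs + b, with W given as a list of rows."""
    return [sum(w * x for w, x in zip(row, inputs)) + bias
            for row, bias in zip(W, b)]


def forward(x, params, f=sigmoid):
    """Forward pass: input (4) -> hidden (4) -> hidden (4) -> output (1)."""
    W1, b1, W2, b2, W3, b3 = params
    z2 = dense(W1, b1, x)        # pre-activations of the first hidden layer
    a2 = [f(v) for v in z2]      # activations a²
    z3 = dense(W2, b2, a2)       # pre-activations of the second hidden layer
    a3 = [f(v) for v in z3]      # activations a³
    s = dense(W3, b3, a3)[0]     # single output neuron (assumed linear)
    return z2, a2, z3, a3, s


def init_params(seed=0):
    """Random weights in [-1, 1] and zero biases (an assumed initialization)."""
    rng = random.Random(seed)

    def matrix(rows, cols):
        return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

    return (matrix(4, 4), [0.0] * 4,
            matrix(4, 4), [0.0] * 4,
            matrix(1, 4), [0.0])


params = init_params()
z2, a2, z3, a3, s = forward([0.5, -1.0, 2.0, 0.1], params)
print("a2 =", a2)
print("a3 =", a3)
print("output =", s)
```

Grouping each layer's weights into one matrix is what lets the per-neuron subscripts drop out: every layer becomes a single matrix-vector product followed by an element-wise application of f.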

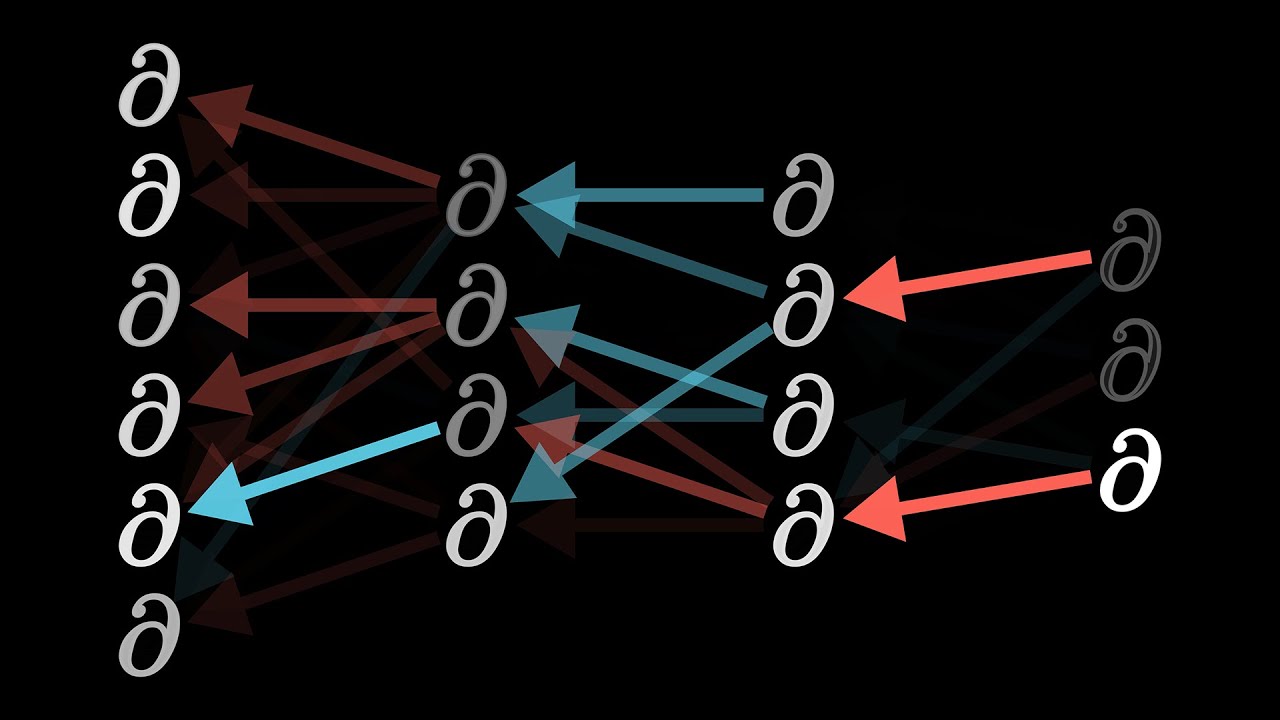

“A man is running on a highway” - photo by Andrea Leopardi on Unsplash

The backpropagation algorithm is probably the most fundamental building block in a neural network. It was first introduced in the 1960s and popularized more than two decades later, in 1986, by Rumelhart, Hinton and Williams in a paper called “Learning representations by back-propagating errors”. The algorithm is used to effectively train a neural network by applying the chain rule. In simple terms, after each forward pass through a network, backpropagation performs a backward pass while adjusting the model’s parameters (weights and biases).
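The idea of a forward pass followed by a chain-rule backward pass can be illustrated on the smallest possible case. The sketch below is not the 4-layer network from this article; it assumes a single sigmoid neuron, a squared-error loss, and a plain gradient-descent update with a hypothetical learning rate `lr`, all chosen only to keep the example short.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def loss(w, b, x, y):
    """Forward pass: prediction a = sigmoid(w*x + b), loss = 0.5 * (a - y)^2."""
    a = sigmoid(w * x + b)
    return 0.5 * (a - y) ** 2


def gradients(w, b, x, y):
    """Backward pass: multiply local derivatives together (chain rule)."""
    z = w * x + b
    a = sigmoid(z)
    dL_da = a - y              # derivative of the loss w.r.t. the activation
    da_dz = a * (1.0 - a)      # derivative of the sigmoid w.r.t. z
    dL_dz = dL_da * da_dz
    dL_dw = dL_dz * x          # dz/dw = x
    dL_db = dL_dz              # dz/db = 1
    return dL_dw, dL_db


# One training step on a single (x, y) pair; all values are illustrative.
w, b, x, y, lr = 0.5, 0.0, 1.5, 1.0, 0.1
loss_before = loss(w, b, x, y)
dw, db = gradients(w, b, x, y)
w_new, b_new = w - lr * dw, b - lr * db   # adjust the parameters
loss_after = loss(w_new, b_new, x, y)
print(loss_before, loss_after)
```

In a multi-layer network the same pattern repeats layer by layer: each layer passes the gradient of the loss with respect to its input back to the layer before it.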
